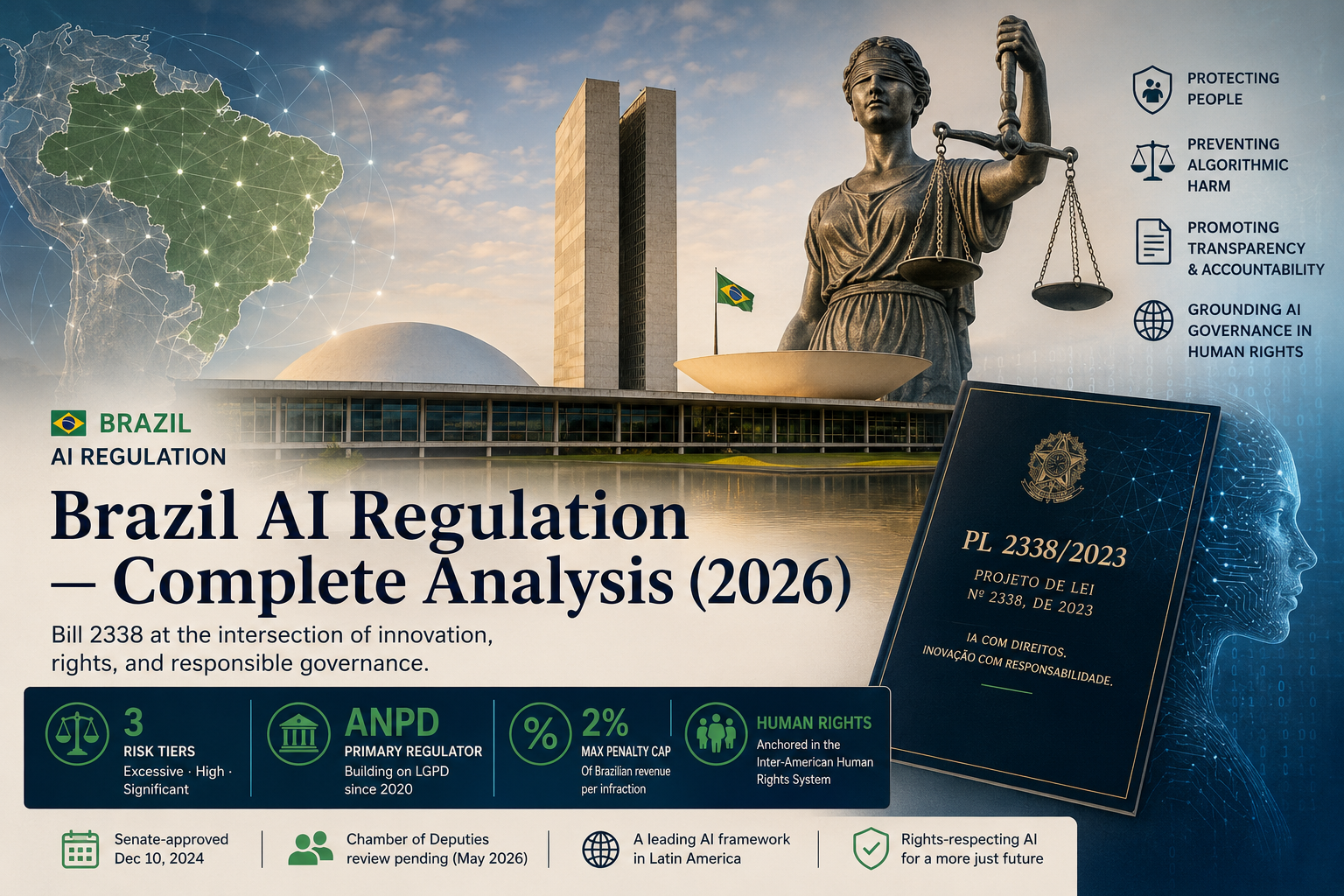

Brazil’s AI Bill 2338 explained — risk classification, ANPD oversight, Inter-American HR System implications, and how it compares to the EU AI Act, as of May 2026.

What Is Brazil’s AI Bill 2338?

Brazil’s AI Bill 2338 is a comprehensive legislative framework establishing principles, rights, and obligations for the development and deployment of artificial intelligence systems in Brazil. Filed in the Federal Senate on May 3, 2023 by then–Senate President Rodrigo Pacheco, the bill is formally titled Projeto de Lei nº 2338, de 2023 and is referenced in Brazilian legal commentary as PL 2338/2023 or simply Bill 2338.

The bill is the first comprehensive AI regulation proposed in Latin America to formally cross-reference Inter-American Human Rights System obligations in its operational provisions. This positioning is not incidental. Brazil, as a State Party to the American Convention on Human Rights and a country that has accepted the contentious jurisdiction of the Inter-American Court, faces treaty-body exposure for State actions — including AI deployments by State actors — that the EU AI Act does not impose on European Member States in the same form.

In 2024, Senator Eduardo Gomes was appointed rapporteur (relator) for the bill and drafted the substitute text (substitutivo) that consolidated input from over a year of public hearings, civil society contributions, and sectoral consultations. The substitute text — not the original 2023 draft — is the version that received Senate approval and is now the operative legislative artifact.

Current Status (May 2026)

As of May 2026, Bill 2338 has passed the Senate but remains pending in the Chamber of Deputies, where amendments are expected to address civil society concerns regarding biometric surveillance carve-outs.

Figure: Congresso Nacional, Brasília. Brazil’s Federal Senate approved AI Bill 2338 in plenary on December 10, 2024. Photo: Unsplash (placeholder).

The legislative progression to date:

- May 3, 2023: PL 2338/2023 introduced in the Federal Senate by Senator Rodrigo Pacheco

- 2024: Senator Eduardo Gomes appointed rapporteur; substitute text drafted following public consultations

- Late 2024: Temporary Internal Committee on Artificial Intelligence (CTIA) approves the substitute text

- December 10, 2024: Federal Senate plenary approves Bill 2338, sending it to the Chamber of Deputies

- 2025: Chamber of Deputies committee review begins

- May 2026: Pending in Chamber of Deputies (current status)

- Future: Chamber vote → potential return to Senate if amended → presidential signature → phased effective dates

The Chamber’s review has surfaced amendments concerning biometric identification for public security purposes, transparency obligations for foundation models, and the relationship between Bill 2338 and Brazil’s existing data protection law. None of these have yet produced a vote.

How Brazil Classifies AI Risk

Brazil’s AI Bill 2338 establishes a three-tier risk classification framework distinct from the EU AI Act’s approach: excessive-risk systems are banned outright, high-risk systems require formal impact assessments, and significant-risk systems are subject to transparency obligations. The classification is subject-based, keyed to potential harm to affected populations, rather than following the use-case approach of the EU AI Act.

- Excessive Risk (Prohibited): Systems classified as posing excessive risk (risco excessivo) are prohibited entirely under the substitute text. The category includes social scoring systems operated by public authorities, real-time biometric identification in public spaces (with narrow law enforcement exceptions actively under amendment), and AI systems designed to exploit vulnerabilities of specific demographic groups. The carve-out scope for biometric identification has been the most contested provision during Chamber review. Civil society organizations have argued that even the narrow law-enforcement exception conflicts with Articles 11 and 13 of the American Convention on Human Rights as interpreted by recent Inter-American Court advisory opinions.

- High Risk (Algorithmic Impact Assessment Required): Systems classified as high risk (alto risco) require an algorithmic impact assessment (avaliação de impacto algorítmico) before deployment, plus ongoing monitoring obligations. The category captures AI systems used in credit scoring, hiring and HR decisions, educational evaluation, criminal justice, public service eligibility, and critical infrastructure management. The impact assessment requirement is operationally substantial. It must document the system’s purpose, training data provenance, performance across demographic groups, and risk mitigation measures. The documentation burden is comparable to the fundamental rights impact assessment in EU AI Act Article 27, here framed explicitly around human rights obligations and affected populations.

- Significant Risk (Transparency Obligations): Systems classified as significant risk face transparency obligations rather than impact assessment requirements. Users must be informed when interacting with AI systems, and providers must publish accessible documentation of system capabilities and limitations. This tier covers most consumer-facing AI applications that don’t fall into the prohibited or high-risk categories.
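The three tiers above can be sketched as a simple lookup. This is only an illustration: the use labels (such as `credit_scoring`), the `RiskTier` enum, and the `classify` function are hypothetical names invented here to paraphrase the examples in this section, not terms from the substitute text. Treating every unlisted use as significant risk is likewise a simplifying assumption, and real classification depends on legal analysis of the deployed system.

```python
from enum import Enum


class RiskTier(Enum):
    EXCESSIVE = "excessive risk (prohibited)"
    HIGH = "high risk (algorithmic impact assessment required)"
    SIGNIFICANT = "significant risk (transparency obligations)"


# Hypothetical labels paraphrasing the prohibited examples in this article.
# The biometric entry ignores the narrow law-enforcement exception, which is
# still under amendment in the Chamber.
PROHIBITED_USES = {
    "social_scoring_public",
    "realtime_public_biometric_id",
    "vulnerability_exploitation",
}

# Hypothetical labels paraphrasing the high-risk examples in this article.
HIGH_RISK_USES = {
    "credit_scoring",
    "hiring",
    "educational_evaluation",
    "criminal_justice",
    "public_service_eligibility",
    "critical_infrastructure",
}


def classify(use: str) -> RiskTier:
    """Return the tier this article associates with a use label."""
    if use in PROHIBITED_USES:
        return RiskTier.EXCESSIVE
    if use in HIGH_RISK_USES:
        return RiskTier.HIGH
    # Assumption: anything else is treated as the transparency tier.
    return RiskTier.SIGNIFICANT
```

A lookup like this is mainly useful as an internal triage step: it flags which products need an impact-assessment workstream before counsel reviews the edge cases.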

Brazil vs EU AI Act — Key Differences

Brazil caps penalties at 2% of revenue earned in Brazil, against the EU AI Act’s ceiling of 7% of global turnover. The calibration to local market conditions is deliberate, but it may understate the practical financial exposure for multinational deployments.

| Dimension | EU AI Act | Brazil AI Bill 2338 |
|---|---|---|
| Risk classification | Use-case based (prohibited / high-risk / limited-risk / minimal-risk) | Subject-based (excessive risk / high risk / significant risk) |
| Impact assessment | Required for high-risk systems (Article 27) | Required for high-risk systems with affected-population focus |
| Enforcement | National authorities + EU AI Office (Article 64) | ANPD as primary regulator + multi-stakeholder governance |
| Maximum penalty | €35M or 7% global turnover (Article 99) | R$50M per infraction or 2% Brazilian revenue (Article 36) |
| Extraterritorial scope | Providers placing AI on EU market (Article 2) | AI processing affecting individuals in Brazil |
| Status (May 2026) | In force; staged applicability through August 2026 | Senate-approved Dec 10, 2024; Chamber pending |
| Human rights anchor | EU Charter of Fundamental Rights | American Convention on Human Rights cross-references |

Source: Regulation (EU) 2024/1689; PL 2338/2023 substitute text.

The penalty calibration is worth dwelling on. The EU AI Act sets maximum penalties at €35 million or 7% of total worldwide annual turnover — whichever is higher — for prohibited AI practices, more than three times Brazil’s revenue-percentage ceiling. For a multinational technology company, the EU exposure is materially larger. But the Brazilian framework’s coupling with American Convention obligations creates a parallel litigation pathway through the Inter-American HR System that has no direct EU analogue — a risk dimension that doesn’t appear in standard penalty comparisons.
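The arithmetic behind this comparison can be sketched in a few lines. This is a rough illustration under stated assumptions: the function names are hypothetical, the EU formula follows the "whichever is higher" rule quoted above, and the Brazilian formula follows the "whichever is lower" reading given in this article's FAQ for the substitute text, which the Chamber may still change.

```python
def eu_max_penalty_eur(worldwide_turnover_eur: float) -> float:
    """EU ceiling for prohibited practices: EUR 35M or 7% of worldwide
    annual turnover, whichever is higher."""
    return max(35_000_000, worldwide_turnover_eur * 7 / 100)


def brazil_max_penalty_brl(brazil_revenue_brl: float) -> float:
    """Per-infraction ceiling as this article reads the substitute text:
    2% of revenue earned in Brazil, capped at R$50M (whichever is lower)."""
    return min(50_000_000, brazil_revenue_brl * 2 / 100)
```

For a hypothetical firm with €10 billion in worldwide turnover and R$5 billion in Brazilian revenue, these formulas give ceilings of €700 million and R$50 million respectively. The Brazilian figure applies per infraction, so total exposure depends on how infractions are counted.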

The ANPD as AI Regulator

Brazil’s National Data Protection Authority (ANPD) becomes the primary enforcer of Bill 2338 under the current text, layered onto its existing role administering the Lei Geral de Proteção de Dados (LGPD) since 2020.

- 1,800+: LGPD investigations opened in 2024 (ANPD Annual Report, 2024)

- R$50M: Maximum penalty per infraction under Bill 2338 (PL 2338/2023 substitute text)

- 31: IACtHR advisory opinions issued since 1979 (Inter-American Court registry, 2025)

- 2020: Year ANPD became operational under LGPD (Law 13.709/2018)


The ANPD’s enforcement readiness is one of the more substantive questions for operational planners. The agency has grown its specialized technical staff each year since 2020 and has published successive guidance on automated decision-making under existing LGPD Article 20 — guidance that effectively foreshadows the Bill 2338 enforcement posture.

The governance structure under Bill 2338 is not pure ANPD authority. The substitute text establishes a multi-stakeholder framework including a coordination body, sectoral regulators (the Central Bank for financial AI, ANATEL for telecom, ANS for health AI), and civil society representation. This is a deliberate structural choice distinct from the EU AI Office’s more centralized model — and one that creates both flexibility and potential coordination friction.
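One way to picture the multi-stakeholder structure is as a routing table from sector to the regulators a deployment would likely need to engage. The sketch below is an assumption-laden illustration: `SECTORAL_REGULATORS` and `regulators_for` are hypothetical names, the sector keys are invented labels, and the article does not specify how authority is actually divided between the ANPD and the sectoral bodies.

```python
# Sectoral regulators named in this article, keyed by hypothetical sector labels.
SECTORAL_REGULATORS = {
    "financial": "Central Bank",
    "telecom": "ANATEL",
    "health": "ANS",
}


def regulators_for(sector: str) -> list[str]:
    """List the bodies this article associates with an AI deployment in a sector.

    The ANPD is always included as primary regulator under the current text;
    a sectoral regulator is appended when the article names one.
    """
    bodies = ["ANPD"]
    sectoral = SECTORAL_REGULATORS.get(sector)
    if sectoral is not None:
        bodies.append(sectoral)
    return bodies
```

For instance, `regulators_for("financial")` returns both the ANPD and the Central Bank, which is exactly where the coordination friction described above would arise.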

The Inter-American Human Rights System Connection

Brazil’s AI Bill 2338 is the first Latin American AI framework to operationalize algorithmic discrimination protections in a manner that explicitly invokes obligations under the American Convention on Human Rights, creating potential litigation pathways through the Inter-American Court for affected populations.

The connection runs through three load-bearing channels.

First, the substitute text’s “affected populations” framing, the architectural choice that distinguishes Brazil’s subject-based risk model from EU use-case classification, directly imports the equal-protection logic the Inter-American Court has developed in its discrimination jurisprudence. The Court has issued 31 advisory opinions since its establishment in 1979; OC-29/22 (2022), on differentiated approaches for specific groups of persons deprived of liberty, is among the more recent to apply that equality reasoning.

Brazil’s AI Bill 2338 imports the equal-protection logic of the Inter-American Court’s discrimination jurisprudence into its operational risk classification — making algorithmic harm to affected populations a regulatory category rather than only a litigation theory.

Second, Brazil’s status as a State Party to the American Convention with accepted contentious jurisdiction means that State deployments of AI — and arguably State failures to regulate private AI deployments — fall within the Inter-American Court’s potential reach. A litigant claiming algorithmic discrimination by a Brazilian State actor has, in principle, a pathway from the Inter-American Commission to the Court that does not exist in the European Convention system in the same operational form for similar AI questions.

Third, the substitute text references the right to “non-discriminatory algorithmic treatment” — a phrasing without direct EU AI Act analogue but consistent with Article 24 of the American Convention (equal protection) as the Inter-American Court has interpreted it.

For technology companies, this matters in a specific way: complying with Bill 2338’s letter is necessary but may not be sufficient where State actors are involved, because Inter-American treaty body practice can impose obligations the domestic statute doesn’t explicitly enumerate.

LATAM Comparative Position

Bill 2338 sits within a regional pattern but is not representative of it. As of May 2026, three Latin American countries (Brazil, Mexico, and Chile) have AI-specific regulatory frameworks at advanced legislative stages, while Argentina and Colombia operate primarily through general data protection laws (OECD AI Policy Observatory, 2026).

Figure: AI regulatory maturity across Latin America (May 2026). Map labels: MX, CA, CO, VE, PE, BO, BR, PY, CL, AR, UY. Legend: AI-specific framework; general data protection; no comprehensive framework. Source: OECD AI Policy Observatory; author analysis.

| Country | AI-specific framework | Year | Regulator | Status |
|---|---|---|---|---|
| Brazil | AI Bill 2338 (PL 2338/2023) | Pending | ANPD | Senate-approved 12/2024; Chamber pending |
| Mexico | National AI Agenda + sectoral | 2023-ongoing | INAI + sector regulators | Strategic framework, not single statute |
| Chile | Proyecto de Ley sobre IA | Pending | TBD | Congressional consideration |
| Argentina | General data protection (Law 25.326) | 2000 | AAIP | Reform pending; AI not codified |
| Colombia | National AI Policy (CONPES 3975) | 2019 | DNP, MinTIC | Strategy document, not statute |
| Uruguay | Personal Data Protection + AI strategy | 2008 / 2020 | URCDP | Strong DP, soft AI policy |

Source: OECD AI Policy Observatory; author analysis.

The regional pattern matters operationally for any organization deploying AI across multiple LATAM jurisdictions. A compliance posture that satisfies Bill 2338 does not automatically satisfy Mexico’s National AI Agenda (where the sectoral structure means financial AI compliance routes through CNBV, while health AI routes through COFEPRIS), nor does it address Argentina’s data protection reform, which is in active legislative motion as of May 2026.

Operational Implications for Companies

The practical implications differ sharply by company type:

Multinational technology companies operating in Brazil should treat the December 10, 2024 Senate approval as the planning trigger. Compliance architecture should be drafted now against the substitute text, flagging the provisions that may shift in Chamber review (particularly biometric surveillance carve-outs and foundation model transparency obligations).

Brazilian startups developing AI products face the heaviest operational compliance burden where their systems land in the high-risk tier. Founders building credit-scoring, hiring, or critical-infrastructure AI should be designing impact-assessment processes now; the documentation burden is meaningful, and retrofitting is more expensive than designing it in.

Foreign companies serving Brazilian users without local establishment cannot rely on “we don’t have an office in Brazil” as a compliance posture, given Bill 2338’s extraterritorial scope layered onto existing LGPD reach. The relevant question is whether processing affects individuals in Brazil.

Government contractors and State-adjacent deployments face the Inter-American HR System exposure most acutely. Compliance with Bill 2338 alone may not address treaty-body claims for AI systems deployed by or for State actors.
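For teams designing impact-assessment processes now, a minimal record structure can make the documentation requirement concrete. The sketch below assumes the four documentation elements this article lists for high-risk systems (purpose, training data provenance, performance across demographic groups, and risk mitigation measures). The class name `ImpactAssessment`, its field names, and the completeness check are hypothetical and do not reproduce any official template.

```python
from dataclasses import dataclass, field


@dataclass
class ImpactAssessment:
    """Hypothetical record of the four elements this article lists."""
    purpose: str = ""
    training_data_provenance: str = ""
    # Maps a demographic group label to a performance metric of your choosing.
    performance_by_group: dict[str, float] = field(default_factory=dict)
    risk_mitigations: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of sections that are still empty."""
        missing = []
        if not self.purpose.strip():
            missing.append("purpose")
        if not self.training_data_provenance.strip():
            missing.append("training_data_provenance")
        if not self.performance_by_group:
            missing.append("performance_by_group")
        if not self.risk_mitigations:
            missing.append("risk_mitigations")
        return missing
```

Wiring a check like `missing_sections()` into a release gate is one way to design the documentation in from the start rather than retrofit it later.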

Working through Brazil AI compliance and the Inter-American HR System layer?

That’s exactly the gap I work in. If your AI deployment in Brazil intersects with treaty-body obligations, I help technology companies and governments translate frameworks like Bill 2338 into operational decisions.

Book a 30-min discovery call →

Methodology

This analysis is based on the published substitute text of PL 2338/2023 as approved by the Senate’s Temporary Internal Committee on Artificial Intelligence (CTIA), supplemented by the EU AI Act (Regulation 2024/1689), the American Convention on Human Rights, and Inter-American Court advisory opinions through OC-29/22 (May 2022). Scope is limited to civil and regulatory frameworks; criminal-law applications of AI are not addressed. Comparative analysis with the EU AI Act references provisions in force as of May 2026 and may not reflect subsequent amendments. This page is reviewed quarterly; substantive Bill 2338 amendments or Chamber votes trigger immediate updates.

Frequently Asked Questions

What is the Brazil AI Bill 2338?

Brazil AI Bill 2338 (PL 2338/2023) is a proposed comprehensive framework for artificial intelligence regulation in Brazil. Introduced in the Federal Senate in May 2023 by Senator Rodrigo Pacheco, the bill establishes risk classifications, transparency obligations, and an enforcement structure centered on the ANPD. The substitute text approved by the Senate on December 10, 2024 is now under review in the Chamber of Deputies.

When will Brazil’s AI law take effect?

Bill 2338 has no effective date as of May 2026 because it has not yet been enacted. Following Chamber of Deputies approval (potentially with amendments requiring Senate reconciliation) and presidential signature, the bill’s substantive provisions are expected to take effect on a staged basis similar to the LGPD’s two-year implementation window. Operational planning should not assume effective dates before late 2026 at the earliest.

How does Brazil’s AI regulation compare to the EU AI Act?

Brazil’s Bill 2338 classifies risk by potential harm to affected populations (subject-based), while the EU AI Act classifies risk by use case. Brazil caps penalties at 2% of Brazilian revenue versus the EU AI Act’s 7% of global turnover. Brazil’s framework cross-references the American Convention on Human Rights, creating a treaty-body exposure pathway with no direct EU analogue. The substitute text places primary enforcement with the ANPD, while the EU model uses a more centralized AI Office structure.

Who regulates AI in Brazil?

The National Data Protection Authority (ANPD) becomes the primary AI regulator under Bill 2338’s current text. The governance structure includes sectoral regulators (Central Bank for financial AI, ANATEL for telecom, ANS for health AI) and a coordination body that includes civil society representation.

What are the penalties under Brazil’s AI law?

The substitute text caps penalties at R$50 million per infraction or 2% of revenue earned in Brazil — whichever is lower. This penalty structure deliberately mirrors LGPD Article 52. The Chamber of Deputies review may modify these provisions before final passage.

Does the Inter-American Human Rights System affect Brazilian AI regulation?

Yes — and this is a distinguishing feature of Brazil’s framework. The substitute text imports equal-protection logic from Inter-American Court discrimination jurisprudence, and Brazil’s acceptance of Inter-American Court contentious jurisdiction means State AI deployments may face treaty-body claims independent of domestic statutory compliance.

How does Brazil’s AI Bill 2338 affect non-Brazilian companies?

Bill 2338 applies extraterritorially to AI systems that process data affecting individuals in Brazil, similar to LGPD’s existing extraterritorial scope. A company without Brazilian establishment can still face Bill 2338 obligations if its AI affects Brazilian users — particularly in high-risk categories like credit scoring, hiring, or critical infrastructure.

Have a Brazil AI policy or compliance question that’s outgrown an internal brief?

I work with governments, multilaterals, and technology companies on questions at the intersection of AI regulation and Latin American human rights frameworks. If your deployment in Brazil — or anywhere in LATAM — needs to address both Bill 2338 mechanics and Inter-American treaty body exposure, let’s talk.

Book a 30-min Discovery Call

Not ready for a call? Subscribe to the LATAM AI Policy Briefing for bi-weekly original analysis.

Notes & Caveats

Bill 2338’s final text may differ from the version analyzed here pending Chamber of Deputies action. Statistics are drawn from the ANPD 2024 Annual Report; the 2025 report, when published, will supersede these figures. I have no current consulting engagement with the ANPD, named Senators, or named regulators referenced in this analysis. Specific compliance decisions in Brazil should be validated with qualified Brazilian counsel (OAB-credentialed); compliance decisions touching the Inter-American Human Rights System should additionally involve counsel familiar with treaty-body practice.